I’m now hitting the phase in my residency where it’s time to start looking ahead to the future, and the future, right now, means documentation.

For the past several months, I’ve been responsible for designing and operating the process of ingesting AAPB drives crammed chock-full of unique video content onto LTO tape. Along the way, I’ve hit a number of the inevitable roadblocks and complications, and had to adjust my tools and strategy accordingly. That’s all to be expected, but what happens when someone else has to pick up where I left off? Although I’ve made all my decisions in consultation with the team and my mentors at WGBH, several nitty-gritty details about how to use the scripts I’ve built and the workflow I’ve developed (and, especially, how both have changed over time) currently exist only in my head. I’ve done some documentation along the way, but now it’s time to take a hard look at my notes and make sure they’re as clear as possible, so that the next person who comes along (and the next after that, and the next after that) can base their preservation decisions on solid data that’s fully understood.

…and when I sat down to start doing that, I remembered that although I’d promised in a previous entry to share my AAPB ingest workflow on this blog, I’d never actually done it. So here goes! (Fair warning: this may get long.)

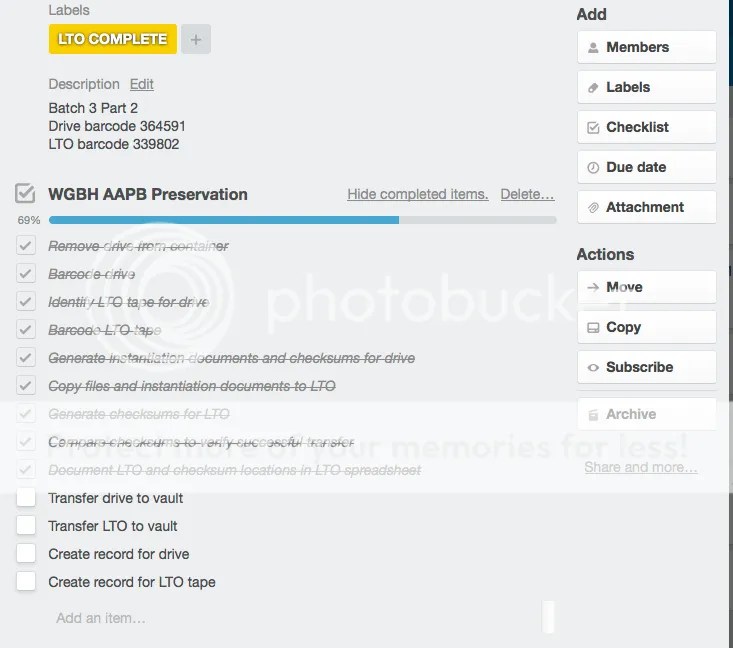

This is a picture of one of my standard Trello checklists for the American Archive drives I’m processing. I developed the checklist fairly early in the process. Looks pretty straightforward, right? Let me go through it and provide a little more context.

- Remove drive from container – early on, we decided to remove all the drives from their cases and store them in anti-static bags, both to cut down on the space they take up in the archive and to keep a standardized workflow for retrieval. However, as we began implementing this procedure, it turned out that some of the Seagate drives sent out in the first batch wouldn’t read back successfully in any container except the one they originally lived in, so the workflow had to be adapted for these drives, which are going to live in a different location.

- Barcode drive – and, of course, our barcoding protocol was designed for naked drives living in anti-static bags in a drawer, not drives living in cases in boxes on shelves, so we had to come up with an alternate barcoding protocol for those problem drives as well.

- Identify LTO tape for drive – although we initially operated on a ratio of one drive to one LTO tape, several of the drives that were sent out contained 3 TB of material, too much to fit on a single LTO tape, so those drives have to be split across more than one tape.

- Barcode LTO tape – here’s one thing that’s remained constant! One LTO tape, one barcode, no problem.

- Generate instantiation documents and checksums for drive – maybe you’re thinking, ‘she broke out “barcode LTO tape” into its own checklist item, and all of this counts as just one step?’ But yes, for my workflow purposes, it is one step! Though it took a little while to make it that way. One of my first tasks when I started this project was to write a bash script that goes through each drive and uses the output of mediainfo’s PBCore 2.0 option as a framework for building a more detailed PBCore instantiation record. The instantiation record includes all the annotations that a technical metadata generator wouldn’t output but that WGBH needs to know as an institution, like the various unique IDs associated with the item, the location of any copies, and, of course, the MD5 checksum. The completed record lives with the file, and is also automatically duplicated into a folder on shared storage for ingest into the metadata repository. Once WGBH’s DAM system is fully operational, a hack like this script shouldn’t be necessary, but for now it lets us keep track of the key information about the files we’re processing. The script would need to be modified before any other repository could adopt it, because it relies on a lot of assumptions about how file structures and barcoding systems are set up at WGBH, but you can check out the latest version on GitHub: https://github.com/WGBH/ltoscripts/blob/master/AA_pbcorescript

- Copy files and instantiation documents to LTO – I use rsync for this, a file-transfer utility that’s widely used in archives for its speed, reliability, and ability to preserve file attributes like timestamps and permissions. Although this step could theoretically be automated, I tend to do it by hand as a check to make sure I’m not accidentally over-filling an LTO tape and that no files are slipping through the cracks.

- Generate checksums for LTO – yep, this one’s another script, a very basic one that just makes sure the checksum document it generates is formatted the same way as the checksum list created in the previous step, so that I can …

- Compare checksums to verify successful transfer – I just use the simple Linux ‘diff’ command for this, which compares two documents and yells if there are any differences between them. If everything’s gone well, in theory there should be no differences at all. In practice, things like splitting one drive across multiple LTO tapes can throw this off and make it more complicated. My scripts aren’t yet smart enough to account for cases like that, so there have been several times when I’ve needed to go back and double-check my data because things didn’t look right. For the record, I’ve caught only one file so far that became corrupted in transfer, but I did catch it! And this process ensures that, in the future, if a file becomes corrupted on tape or while being recovered from tape, we’ll know. (Trust me: coming off my other digital forensics project, this is really relevant.)

- Document LTO and checksum locations in LTO spreadsheet – this is a shared document that tracks what data lives on which LTO tape and where all the metadata about that data is hanging out. Again, the final implementation of our HydraDAM system will hopefully make this obsolete eventually, but for now we have to keep tracking this kind of meta-metadata by hand.

- Transfer drive to vault – in theory, this is one of the easy parts! In practice, when there are questions – like “where ARE we going to store those drives that we couldn’t take out of their cases?” – this is the step that tends to get put off the longest.

- Transfer LTO to vault – however tempting it is to put this one off, all the work I’ve done is really of no use if the tapes and the drives don’t end up where they belong and where they can be found again.

- Create record for drive

- Create record for LTO tape – “but didn’t you already talk about how you recorded this information in a spreadsheet?” Yes, but the spreadsheet is for internal use within the Media Library and Archives, whereas this record goes into the broader WGBH-wide database system so that employees can request the drive or the LTO tape if they need to recover something from it.
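For readers who want to see the checksum half of this checklist in code, here is a minimal Python sketch of generating an MD5 manifest for a drive and for its LTO copy, then comparing the two. It is not the bash script linked above, and everything in it (the function names, and the idea of a manifest as a mapping from relative path to MD5) is an illustrative assumption. Because it compares by relative path and merges any number of tape manifests before checking, it also suggests one way to handle a drive split across several tapes, which a plain `diff` of two checksum files can’t do directly.

```python
import hashlib
from pathlib import Path


def md5_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Return the hex MD5 of a file, reading in chunks so large video files never sit fully in memory."""
    digest = hashlib.md5()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(root: Path) -> dict[str, str]:
    """Map each file's path relative to `root` (POSIX-style) to its MD5."""
    return {
        p.relative_to(root).as_posix(): md5_of(p)
        for p in sorted(root.rglob("*"))
        if p.is_file()
    }


def write_manifest(manifest: dict[str, str], out: Path) -> None:
    """Write sorted 'checksum  path' lines (md5sum's layout) so two manifests diff cleanly."""
    lines = [f"{md5}  {rel}\n" for rel, md5 in sorted(manifest.items())]
    out.write_text("".join(lines), encoding="utf-8")


def compare_manifests(drive: dict[str, str], *tapes: dict[str, str]) -> dict[str, list[str]]:
    """Check a drive's manifest against one or more tape manifests.

    The tape manifests are merged first, so a drive split across several
    tapes is verified as a whole. Every list is empty when the copy is good.
    """
    merged: dict[str, str] = {}
    repeated: set[str] = set()
    for tape in tapes:
        for rel, md5 in tape.items():
            if rel in merged:
                # Same path on more than one tape: only the last tape's
                # checksum is compared below, so flag it for a manual look.
                repeated.add(rel)
            merged[rel] = md5
    return {
        "missing_on_tape": sorted(drive.keys() - merged.keys()),
        "extra_on_tape": sorted(merged.keys() - drive.keys()),
        "mismatched": sorted(r for r in drive.keys() & merged.keys() if drive[r] != merged[r]),
        "on_multiple_tapes": sorted(repeated),
    }
```

A call might look like `compare_manifests(build_manifest(Path("/mnt/drive")), build_manifest(Path("/mnt/lto_a")), build_manifest(Path("/mnt/lto_b")))`, where the mount points are hypothetical; empty lists in every category are the equivalent of `diff` staying quiet. The tape manifest should be computed by reading the files back off the tape itself, since that is what catches corruption introduced during the copy.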

So that’s the basic workflow, which doesn’t take into account special circumstances that apply only to some drives. For example, some drives have known failed files on them, which are listed in a separate Excel sheet; I’ve modified my scripts to include a process that pulls those files and moves them to separate folders so they don’t take up space on an LTO.
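Pulling known failed files aside could be sketched in Python along these lines. This assumes, hypothetically, that the Excel sheet has been exported to CSV with a `filename` column holding each failed file’s path relative to the drive root; the column name and the function name are illustrative, not taken from the actual scripts. Files are moved rather than deleted, and the quarantine folder should sit outside the tree that gets copied to tape, or the files will end up on the LTO anyway.

```python
import csv
import shutil
from pathlib import Path


def quarantine_failed_files(
    drive_root: Path,
    failed_csv: Path,
    quarantine_root: Path,
    column: str = "filename",  # hypothetical column name in the exported sheet
) -> tuple[list[str], list[str]]:
    """Move files listed in `failed_csv` from `drive_root` into `quarantine_root`.

    Relative paths are preserved under `quarantine_root`, so it stays clear
    where each file came from. Returns (moved, unresolved) relative paths.
    """
    moved: list[str] = []
    unresolved: list[str] = []
    # utf-8-sig tolerates the byte-order mark Excel may add to CSV exports.
    with failed_csv.open(newline="", encoding="utf-8-sig") as fh:
        for row in csv.DictReader(fh):
            rel = (row.get(column) or "").strip()
            if not rel:
                continue  # blank cell in the exported sheet
            rel_path = Path(rel)
            # Refuse absolute or '..' paths so nothing outside the drive is touched.
            if rel_path.is_absolute() or ".." in rel_path.parts:
                unresolved.append(rel)
                continue
            src = drive_root / rel_path
            if not src.is_file():
                unresolved.append(rel)  # listed in the sheet but not on the drive
                continue
            dest = quarantine_root / rel_path
            dest.parent.mkdir(parents=True, exist_ok=True)
            shutil.move(str(src), str(dest))
            moved.append(rel)
    return moved, unresolved
```

Anything returned as unresolved is worth checking by hand before the copy, since it means the sheet and the drive disagree about what’s there.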

This initial workflow also leaves out a number of smaller QC procedures that I’ve learned to include over time, after stumbling over problems I hadn’t expected. For example, after discovering that some failed files and non-a/v files had been accidentally included in the collection, I started running searches on each batch of PBCore records I generated to identify any that don’t have an audio or video essence track. Recently, we also discovered that some of the files we’ve been ingesting are low-quality derivatives that we probably shouldn’t be preserving, so I’m currently modifying my scripts again to try to weed those extra files out up front as well. As a result, the drives I’m processing now end up looking a little different from the drives I processed at the beginning of the project, before I’d figured all of that out.
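The essence-track search described above could look something like this in Python, using the standard library’s ElementTree. It assumes each PBCore record is a separate `.xml` file in one folder, and that track types appear in `essenceTrackType` elements with values like `Video` or `Audio`, as in the PBCore 2.0 schema; the exact values mediainfo writes are worth confirming against real records before relying on this, and the function names are hypothetical. Namespaces are stripped when matching tag names, so the check works whether or not a record declares the PBCore namespace.

```python
import xml.etree.ElementTree as ET
from pathlib import Path

# Assumed essenceTrackType values that count as a/v content (compared case-insensitively).
AV_TYPES = {"audio", "video"}


def local_name(tag: str) -> str:
    """Strip the '{namespace}' prefix ElementTree puts on namespaced tag names."""
    return tag.rsplit("}", 1)[-1]


def essence_track_types(record: Path) -> list[str]:
    """Return the text of every essenceTrackType element in one PBCore XML file."""
    root = ET.parse(record).getroot()
    return [
        (el.text or "").strip()
        for el in root.iter()
        if local_name(el.tag) == "essenceTrackType"
    ]


def records_without_av(record_dir: Path) -> list[str]:
    """List (sorted) the record filenames that declare no audio or video essence track."""
    flagged = []
    for record in sorted(record_dir.glob("*.xml")):
        types = {t.lower() for t in essence_track_types(record)}
        if not types & AV_TYPES:
            flagged.append(record.name)
    return flagged
```

A record that can’t be parsed at all will raise `xml.etree.ElementTree.ParseError`, which is arguably just as worth flagging as a record with no a/v tracks.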

Whether or not you read through all of those workflow steps, the point I’m trying to get at here is that in my experience workflow is never really ‘basic,’ and it’s never static. It’s constantly changing to take into account new circumstances you didn’t anticipate, and there’s no way to get around that. As archivists, the material that we’re preserving just isn’t a series of square pegs; there’s always going to be that one item that throws the whole plan off-kilter. That’s why it’s so vital to make sure you keep documenting what you’re doing, so that if someone in the future is wondering why the material or metadata they’re looking at suddenly looks a little different than it did before, they have a clear and easy answer as to why, how, and what to do about it.

— Rebecca, signing off, and diving back into documentation!